As mentioned in his excellent video review, I joined my buddy Tyler Stalman on his podcast, the eponymous Tyler Stalman Podcast. On this episode, we discussed Tyler’s sneak preview of the iPhone XR, which just went up for presale overnight here in the States.

Come for the iPhone XR review, but stay for the really wonderful and meandering discussion of being a creator — both podcast and YouTube — in 2018.

I had a blast turning the tables on Tyler for this episode; I suspect you’ll probably enjoy it too.

I’ve spoken about my “go pack” not once, but twice in the past. However, it’s been a couple of years, so I figured it’s worth revisiting.

In short, I’ve found it to be incredibly convenient to keep a small bundle of essential and redundant chargers/dongles/doodads in a small pack that can be grabbed at a moment’s notice. If I’m leaving the house to go somewhere overnight, I take my go pack with me. I have 100% confidence that any cable I may need will be in it.

The key to the go pack — convenient both at home and away — is redundancy. I don’t have to go disconnecting my bedside setup every time I go on a trip. I simply grab my go pack.

Some of these cables are not cheap, which makes this a seemingly wasteful endeavor. The convenience of knowing everything I need is waiting for me makes the juice well worth the squeeze.

Essential Items

A — Harry’s Toiletry Bag — $20

I got this as a sponsorship perk/freebie, and though it’s designed for Harry’s wonderful razors/etc, the Toiletry Bag is actually a wonderful go pack. It’s light, smushes down if necessary to fit in a corner of your bag, and has two compartments to store stuff in.

B — Anker PowerPort 5 — $23

I resisted buying one of these for the longest time, but inevitably caved. I’m glad I did. This small brick will charge both phones, both watches, and one other USB item simultaneously. Very convenient, especially when traveling internationally, as you only need one plug adapter for five devices.

C — Apple Lightning Cables × 3 — $19 each

I have actually had good luck with the far better priced $7 Monoprice Lightning cables. However, I have enough first-party Lightning cables lying around that I’ve just committed to using only those. I landed on three because I have both Erin’s phone and my phone, as well as my AirPods or occasionally my iPad.

D — Apple Watch Charging Cable × 2 — $29 each

Erin and I each have an Apple Watch. There are no third-party substitutes that I trust, even if they do exist. Expensive, but worth it.

E — Cable Matters 6’ USB Extension Cable 2-pack × 3 — $8 each

Our Anker charging brick is in one spot, but the devices it charges may be across the room from the brick. Erin’s phone, in particular, is often on the other side of the bed from the power, which lives near my side. I’ll daisy-chain two of these extensions with a Lightning cable to enable Erin to leave her phone on her bedside table. Sometimes I need one as well, so I carry three cables. I’ve searched long and hard for thin USB extension cables, and someone recommended these to me on Twitter. I can’t find that tweet, but I’m so thankful for it. The ones linked on Amazon look different, but according to Amazon, are the same SKU I had purchased.

F — Monoprice Ultra Slim HDMI Cable — $11

I often want to plug one of my devices, be it a computer or iOS device, into a hotel TV. It’s often easiest to do so with my own HDMI cable. These directional cables are as slim as I’ve found, and work great, as long as you plug them in the right way.

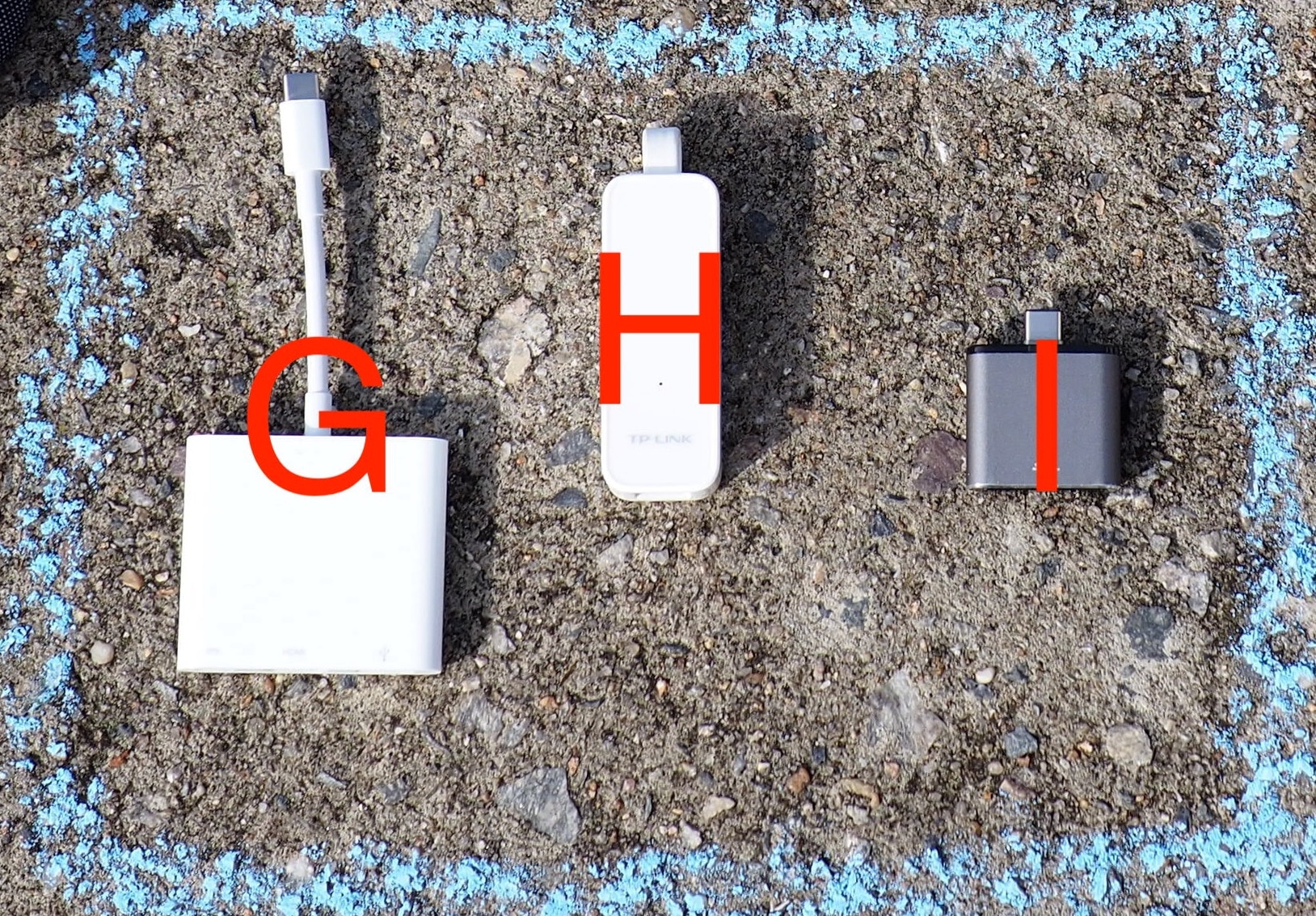

Dongletown

G — Apple USB-C Digital AV Multiport Adapter — $69 (!!)

This ridiculously expensive USB-C dongle gives me USB-C in (for power), USB-A (for a peripheral), and HDMI out (for using an external TV/monitor). I’ve tried third party versions, but they seemed less reliable, particularly with the USB-A port. However, if I were to recommend one, I’d go with the FastSnail knockoff, which is roughly half the price.

H — TP-Link USB 3.0 to Ethernet — $14

Unfortunately, with the ubiquity of WiFi, hotel rooms very rarely offer Ethernet ports anymore. However, you never know when you’ll want to transfer a lot of data, or wish to do so slightly more securely than WiFi allows. Though I’ve tried some native USB-C adapters in the past, I’ve settled on pairing this traditional USB adapter with the above Multiport Adapter. This way my one-port MacBook can have power and Ethernet, rather than one or the other. Conveniently, the TP-Link adapter requires no drivers to be installed on my Mac.

I — Monoprice USB-C SD Card Reader — $12

I have a “big camera”, and take it with me pretty much anytime I’d also need to grab my go pack. To read the images on the “film” in that camera, I use this very-cheap and very-reliable Monoprice reader.

Non-Essential

J — SF Cable 6’ Flat Ethernet Cable — $6

As previously stated, Ethernet ports are very rare these days, but it is sometimes convenient to have a cable with you. This flat Ethernet cable is small and light.

K — Apple Lightning Digital AV Adapter — $49 (!)

Speaking of hilariously expensive peripherals (though in this case we may know why), I still like to carry one of these dongles. It allows me to connect my iPhone (or iPad) to a TV via HDMI. Since I typically have my MacBook with me, I use this sparingly, but it is handy in a pinch.

All-in, with the bag and the non-essential items, the total bill is an eye-popping $340. However, you may find that you already have many of these items lying around. For me, my spend expressly for my go pack was roughly $100 less.
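As a rough sanity check on that number, the list prices above can be added up in a few lines of Python. This is only a sketch: the dictionary layout, the section labels, and the `subtotal` helper are made up for illustration, and it assumes the USB extension line means three purchases at $8 each.

```python
# (unit price in USD, quantity, section), copied from the list prices in this post.
# The section labels mirror the post's headings; the layout itself is hypothetical.
ITEMS = {
    "A Harry's Toiletry Bag": (20, 1, "essential"),
    "B Anker PowerPort 5": (23, 1, "essential"),
    "C Apple Lightning Cable": (19, 3, "essential"),
    "D Apple Watch Charging Cable": (29, 2, "essential"),
    "E Cable Matters USB Extension": (8, 3, "essential"),  # assumption: 3 x $8
    "F Monoprice Ultra Slim HDMI Cable": (11, 1, "essential"),
    "G Apple USB-C Digital AV Multiport Adapter": (69, 1, "dongletown"),
    "H TP-Link USB 3.0 to Ethernet": (14, 1, "dongletown"),
    "I Monoprice USB-C SD Card Reader": (12, 1, "dongletown"),
    "J SF Cable Flat Ethernet Cable": (6, 1, "non-essential"),
    "K Apple Lightning Digital AV Adapter": (49, 1, "non-essential"),
}


def subtotal(section=None):
    """Sum price * quantity, optionally restricted to one section."""
    return sum(
        price * qty
        for price, qty, sec in ITEMS.values()
        if section is None or sec == section
    )


if __name__ == "__main__":
    for section in ("essential", "dongletown", "non-essential"):
        print(f"{section}: ${subtotal(section)}")
    print(f"total: ${subtotal()}")
```

Under those assumptions the list prices sum to $343, in line with the rounded figure above; the non-essential items account for $55 of it.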

As a final tip, believe in the sanctity of the go pack. Never remove an item from the pack, as you will forget to return it, and then end up on a trip without an essential cable. I made this mistake on a recent beach vacation, and were it not for some good luck and redundant items in the car, I would have been doomed.

Over the last couple of years, I’ve become more cognizant of the feel of the things I put on the internet. It is way too easy to fire off a snarky tweet, or a hot take, about something that isn’t perfect. I’m trying to stop doing that, and also start calling out things that are great.

Sam and Ross Like Things is one of those great things.

Two friends, Sam and Ross, who happen to be not only fellow Richmonders but also friends of mine, talk about two things that they like every fortnight.

Their one rule: No hedging.

I was lucky enough to be their guest this particular fortnight, and though there are a zillion things I could have spoken about, I had to choose only one:

Cook Out. 🤤

I love this show, and I had a blast doing it. Unlike nearly all of my podcast appearances, I was able to do this in-person. Which was critical, since Sam had never had Cook Out before. 😱

Naturally, I came bearing gifts.

Anyway, S&RLT is a great show that puts a little bit of happiness in the world every other week. You should definitely check it out.

Oftentimes, the best (but also worst) problem in the world is a difficult choice.

As I write this, 40mm versus 44mm is one of those choices.

Sometimes it’s shaken or stirred.[1]

If you’re very lucky, it’s something else entirely: GTI or R.

Volkswagen offers several different flavors of its all-things-to-all-people Golf line. Many of them I find uninteresting. Two of them are not only interesting, but compelling: the GTI and the R.

How do you choose between them? At first glance, it’s a very difficult choice to make.

Two very powerful, very fast, very comfortable, very good hatchbacks, both of which seem like they should cost more money than they do. It’s a tough choice.

Video

This would not be a Casey on Cars review without a video. You can find my video review of the GTI on my YouTube channel. I’d love it if you took a few minutes to watch it.

Too Much of a Good Thing

In the Golf line, there are several different models:

- Golf – The standard economy hatchback

- e-Golf – The super economy hatchback

- GTI – The hot hatchback

- R – The performance hatchback

The space between the models varies depending on which pairing you’re comparing. With the GTI and the Golf R, the differences are slight. Almost imperceptible at a glance.

In my review of the R, I wrote the following:

The Golf R is, on the surface, little more than a GTI cranked to 11. However, upon closer inspection, it’s quite a lot more than that… while also being less than that.

Having spent a week with the GTI, I stand by the above.

Again quoting myself:

At a glance, there are a few major changes from the GTI. The Golf R:

- Has a Haldex all wheel drive system

- Has more power:

  - Horsepower is bumped from 220 → 292, a ∆ of 72 HP

  - Torque is bumped from 258 → 280, a ∆ of 22 lb-ft

- Is made in Germany (the GTI is actually assembled in Mexico)

- Has Volkswagen’s new “Digital Cockpit” instead of traditional analog gauges.

- Has an electronic parking brake

As it turns out, there’s a lot more space between the two cars than I originally thought.

Starting Lineup

The particular GTI I was able to spend time with was an Autobahn, which is the highest trim level available on the GTI.

The GTI S is the base model; if you opt for the SE, you get:

- A larger 8" touchscreen (instead of the S’s 6.5")

- Sunroof

- A limited slip differential

- Larger brakes

- Nicer wheels

- LED headlights

- Blind spot monitoring

Opting instead for the Autobahn also gives you:

- Adaptive cruise control

- Dynamic chassis control

- Fender audio

- More configurable driver’s seat

- Leather seats

- Dual-zone climate control

The Autobahn is considered fully loaded; the only options you really get at the Autobahn trim level are exterior color and transmission.

Shifting Gears

The particular GTI Autobahn I was given was equipped with Volkswagen’s direct shift gearbox, which is their dual-clutch automated manual transmission. It’s also a $1,100 option. To oversimplify, the DSG is sorta kinda two separate manual transmissions that are interleaved. There are two clutches, one per transmission, which allow for expected shifts to be lightning-fast.

I’ve said for years that I much prefer DCT/DSG transmissions over traditional automatics. Though I’ll always prefer to row my own, it’s clear that won’t be an option for long. A DCT/DSG is as close as one gets while still having only two pedals.

Or is it?

As discussed in my Giulia review, the ZF 8-speed is truly phenomenal. So good, in fact, that I think I prefer it to the DSG in the GTI. Which is a deeply uncomfortable conclusion I never expected to come to.

The problem with the DSG in the GTI is that it never lets you forget that you are driving a hack. It’s a phenomenally impressive hack, but ultimately, still a hack. It’s taking a manual transmission and trying to bend it to be something it really isn’t. It’s right there in the name: manual transmission! It shouldn’t be automated!

This is most easily exemplified by trying to take off, from a stop, with a moderate quickness. In the ZF, it would behave exactly as I expected: a quick-ish launch, then lots of acceleration. In the GTI, it was different. I got a standard, more leisurely launch, and then once the computer felt like the clutch was done slipping, it would lay on the throttle.

This never felt really natural, and always frustrated me, at least a little bit.

Otherwise, the DSG was great. The only other foible I really noticed was that it allowed you to request a downshift into first gear at speeds where you probably shouldn’t. It was never smooth, to the point that I wish the transmission just ignored my requests. I eventually trained myself not to ask for a downshift to first, but it took me a while.

Traction Liss

Though the DSG left me wanting for the magical ZF 8-speed[2], all in all I liked it a lot. Regardless of which transmission it’s equipped with, the GTI is stunningly quick.

I quite expected to dearly miss the speed of the R. I expected the GTI to be noticeably — if not considerably — slower.

It turns out I was right, but not for the reason I expected.

The GTI makes way, way too much power for the front wheels.

Any time I really got on the gas, I got wheelspin. Wet weather? Wheelspin. Dry weather? Wheelspin. Turning? Wheelspin. Straight shot? Wheelspin.

Naturally, this could come down to the Bridgestone tires that the car was equipped with. A friend also has a 2018 GTI Autobahn, but with aftermarket tires, and he swears it’s a totally different car. But he concedes it doesn’t make the traction problems go away; they’re simply decreased.

When the GTI digs in, and connects, it’s nearly as fast as the R. Fast enough that my butt dyno couldn’t really tell much of a difference. However, with almost any steering input, or any amount of moisture on the road, all bets are off.

So, the R is quite a bit faster, but not because of the motor; it’s because of the all wheel drive system.

On the plus side, however, the limited slip differential in the GTI must be powered by unicorn tears, because it was magical. I never felt any torque steer, which I remain surprised by. The last time I drove a truly powerful front wheel drive car — my dad’s SRT-4 — it torque steered like its life depended on it. I got none of that in the GTI.

Value for Money

The GTI has its problems, but one thing I consider to be inarguable: a Volkswagen GTI — in any trim — is incredible value for money. The interior fit and finish feels like a car north of $40,000. The GTI is well equipped, particularly in Autobahn trim. All of this car can be had for $37,065.

However, a base GTI S starts at $26k. That’s already a ridiculous deal, but from what I’ve been told, dealers are willing to make deals on GTIs. It’s not unheard of to get a GTI for around $20k. Brand new.

I can’t imagine a better deal for a car that can do almost everything, and do so with such gusto.

GTI vs. R

When I reviewed the R, I had said that I was looking to unload my BMW, and replace it with either a GTI or an R. Having had a week with the R, then a week with the GTI, I have reached a conclusion.

The GTI is legendary. People have been raving about GTIs since before I was born, and for good reason. I’ve heard from everyone — those in the know and those who are not — that it’s the ultimate all-things-for-all-people car. And everyone is right; the GTI really is. Bang for the buck, value for money, it’s the best I’ve ever driven. By a mile.

However, I hated the GTI.

I hated the GTI because it exposed a second uncomfortable truth: I’m not a particularly good driver. I’m not good at being gentle with steering and throttle input. I’m not good at being… discreet. I like to be able to just plant the gas, turn the wheel, and go.

Thus, a couple of weeks ago, this happened:

We bought ourselves a 2018 Golf R. 6MT, of course.

The GTI is an incredible deal, and a phenomenal car. I would happily drive one. But to spend my money, I wanted to get the car that best suited my driving style. So we got a Golf R.

I couldn’t be happier.

At the end of the day, I put my money where my mouth is, and I did my part to save the manuals. Will you do yours?

On this week’s episode of Kathy Campbell’s feel-good podcast about podcasts, Friends in Your Ears, I join my ~~friend~~ ~~nemesis~~ fremesis Matt Alexander to discuss how we got into podcasts, why we started making them, and our favorite podcasts.

Additionally, Matt and I end up dissatisfied with each other’s answers to the bonus question, and decide to take matters into our own hands.

This was tremendous fun to record; I bet you’ll enjoy it.

This week I joined Jason Snell, Stephen Hackett, and fellow guest Carolina Milanesi on Relay’s weekly wrap-up show, Download. Download is designed to give you an overview of the interesting tech stories, all in one easy-to-consume episode. Think of it as a summary version of Subnet.

On this week’s episode, we discussed iPhone and Watch rumors, leaving Facebook, Facebook and Twitter on Capitol Hill, and Evernote circling the drain.

I’m not really sure why I started listening to Free Agents, but I did so from the very first episode.

I suppose it was because it starred two friends of mine, David Sparks and Jason Snell. The content wasn’t relevant to me though, as I would never be bold enough to leave my job.

Well, life finds a way.

Fast forward two years, and a swap from Jason to Mike Schmitz, and I’ve found myself on the most recent Free Agents as a guest.

This was a tremendous honor, and a ton of fun to record. We discussed what it was like to decide to go indie, and how I didn’t learn any of the lessons the prior 53 episodes of Free Agents tried to teach me.

Whether or not you’re interested in going indie, it was a fun conversation; I encourage you to check it out.

I’m a bit behind on my blog posts; I’ve been furiously filming footage for my next episode of Casey on Cars. Speaking of Casey on Cars, I discussed the stars of two forthcoming episodes with Sam and Dan on the most recent episode of their very enjoyable car-themed podcast, Wheel Bearings.

On this episode we discussed what I’ve been driving, but also the land yacht that Dan has fallen in love with, as well as a couple of eco-friendly cars Sam has found himself in. Finally, we end up having an accidental tech podcast at the end. Figures.

If you even slightly enjoyed Neutral, I can’t recommend Wheel Bearings enough.

Today I joined Guilherme Rambo and John Sundell on episode 12 of their great podcast, Stacktrace. Think ATP, but if the three of us really let our developer flags fly.

On this episode, Gui, John, and I discussed Gui’s spelunking in the most recent iOS 12 beta, CarPlay, Casey on Cars, and going indie. I had a blast talking with the boys, and I think you’ll really enjoy the episode.

In general, Stacktrace is great for not only the investigative reports from Gui, but also the experience and insight from John. I’ve been subscribed since episode one; you should check it out.

Instagram almost always makes me happy. Which is pretty much the opposite of Twitter.

Yesterday on Instagram, I asked this question:

I got a lot of good responses. I’d love some more diverse accounts though, so please reply to my Instagram story with good ones! Here are some accounts that I’ve checked out and have started following:

- John Kraus

18-year-old that mostly shoots rocket launches and coastal Florida. Recommended by Andy Levy and Victor Lopez III.

- Ox

Photos of architecture with punny dad joke captions. Recommended by Brian Renshaw.

- Zach Doehler

18-year-old nature photographer with a concentration on color. Recommended by Will Sigmon.

- Igor Lipchanskiy

What happens if you photoshop yourself into album covers? Recommended by Keith Irvine.

- US Department of the Interior

Beautiful shots of American nature at its finest. Recommended by Peter.

- Rui Gaiola

Really great outdoor and nature photography. Recommended by Pedro Mendes.

- Noel Y. C.

A ~~love letter~~ postcard to New York City. Recommended by Cian Dowd.

- Stephen McMennamy

Take two photos and combine them so they look like one. Recommended by Wesley Forlines.

And finally, since I have your attention:

- Casey Liss

Family, cars, football, and occasionally booze.